- What Does "Crawled - Currently Not Indexed" Actually Mean?

- The Three-Stage Journey Every URL Must Complete

- Every Root Cause of Crawled - Currently Not Indexed (And Its Specific Fix)

- Deep-Dive Diagnostics: How to Identify and Fix Each Cause

- Fix 1: Thin Content and Low Content Value

- Fix 2: Duplicate and Near-Duplicate Content

- Fix 3: Weak Internal Linking — The Orphan Page Problem

- Fix 4: Noindex Tags and Robots.txt Conflicts

- Fix 5: Crawl Budget Exhaustion on Large Sites

- Fix 6: Page Speed and Core Web Vitals Failures

- Fix 7: Search Intent Mismatch

- Fix 8: JavaScript Rendering Issues

- Crawled - Currently Not Indexed vs Discovered - Currently Not Indexed: Key Differences

- The Complete Step-by-Step Fix Checklist for Crawled - Currently Not Indexed

- When You Should NOT Fix Crawled - Currently Not Indexed

- The Fastest Way to Reindex Fixed Pages: SpeedIndex Pro and Google's Official Indexing API

- The Exact Workflow: Fix and Reindex in Under 10 Minutes

- Why SpeedIndex Pro Works Where Manual GSC Requests Fall Short

- No Google Search Console Login Required

- Preventing Crawled - Currently Not Indexed From Reoccurring

- Publish a Quality Threshold Before Every Post

- Build Internal Links on the Same Day You Publish

- Submit New URLs Through SpeedIndex Pro on Publication

- Audit Your GSC Excluded Pages Report Monthly

- Keep Crawl Budget Clean on Large Sites

- Frequently Asked Questions

- How long does it take for Google to reindex a fixed page?

- Can I just click Request Indexing in Google Search Console?

- Will my page rank immediately after being indexed?

- My page has been fixed for weeks but is still not indexed — what do I do?

- How many pages showing this status is normal?

- Does building backlinks help fix this status?

- What is the difference between deindexed and crawled not indexed?

- Fix Your Indexing Issues Today — Then Submit With SpeedIndex Pro

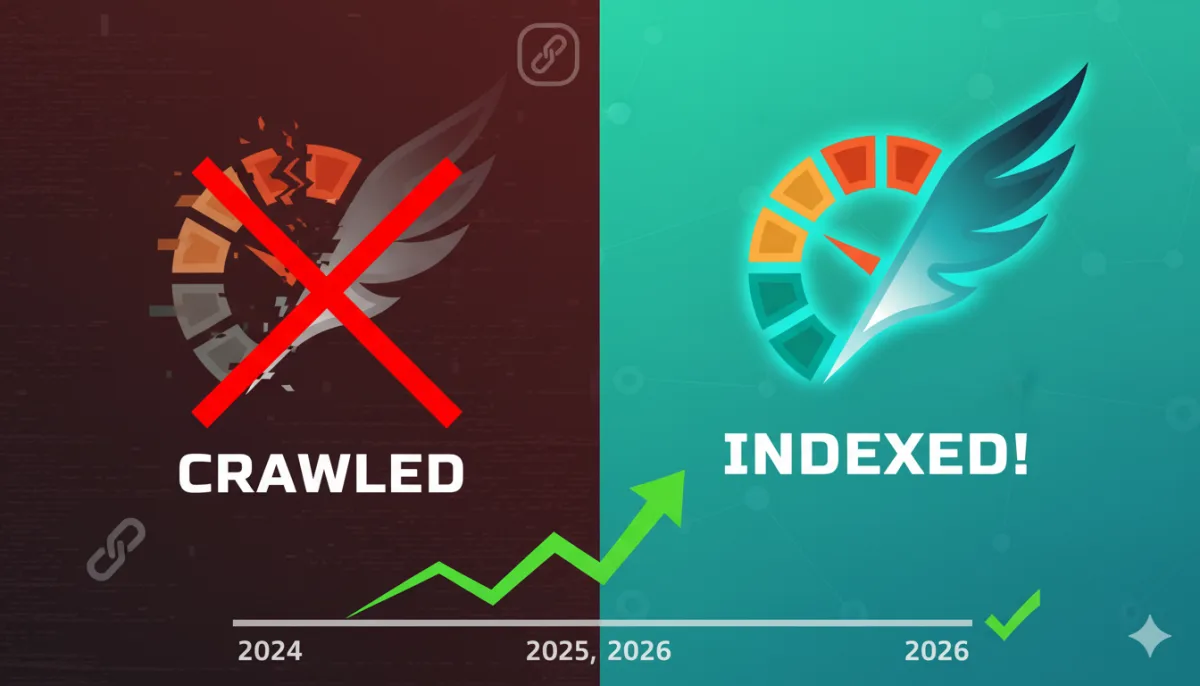

You open Google Search Console, navigate to the Pages report, and there it is. A long list of URLs sitting under the status "Crawled - currently not indexed." Pages you spent hours writing. Product pages you need driving revenue. Backlinks you built for SEO campaigns. All of them visited by Googlebot — and all of them deliberately excluded from Google's index. Invisible to every search query. Generating zero organic traffic.

This is one of the most common, most frustrating, and most misunderstood indexing errors in all of SEO. And it is one that website owners, bloggers, e-commerce operators, and SEO professionals encounter constantly — often without a clear understanding of why it is happening or what the fastest, most reliable path to resolution actually looks like.

This guide covers everything. What the error means precisely. Why Google applies it. Every root cause with its specific fix. The difference between this status and "Discovered - currently not indexed." When you should not fix it. And the fastest method available to trigger reindexing once your fixes are in place — using Google's own official Indexing API through SpeedIndex Pro.

What this guide covers: Root causes of Crawled - Currently Not Indexed | Full diagnostic checklist | 12 specific fixes | Difference vs Discovered - Currently Not Indexed | When NOT to fix it | Fastest reindexing method available in 2026

What Does "Crawled - Currently Not Indexed" Actually Mean?

"Crawled - currently not indexed" is a status that appears in the Pages report of Google Search Console, among the reasons listed for pages that are not indexed (the older Coverage report grouped it under Excluded). It means Googlebot visited and read the page, so the crawl was successful, but Google then made a deliberate decision not to add that page to its search index.

This distinction is critical and widely misunderstood. The page is not blocked. It is not inaccessible. Googlebot physically visited it, processed its content, and then Google's indexing systems evaluated what it found and concluded: this page does not currently meet the bar for inclusion in search results.

Because the page is excluded from the index, it cannot appear in any Google search result for any query. It is not ranking on page 10 — it is simply absent. No impressions, no clicks, no organic visibility of any kind.

The Three-Stage Journey Every URL Must Complete

1. Crawling — Googlebot discovers the URL, visits it, and reads the page content

2. Indexing — Google processes what it found, evaluates quality and relevance, and adds the page to its searchable database

3. Ranking — the indexed page becomes eligible to appear in search results when relevant queries are made

Crawled - currently not indexed means the URL completed stage one but failed at stage two. The page stays invisible in search until the underlying reason for the indexing refusal is identified and corrected.

Critical distinction: A page with this status has been EVALUATED by Google and actively rejected, not merely overlooked. This means passive waiting is almost never the right response. Google has seen the page and decided it is not worth indexing at its current quality level.

Every Root Cause of Crawled - Currently Not Indexed (And Its Specific Fix)

Google does not publicly publish a detailed ruleset explaining exactly when it applies this status. However, years of industry research, large-scale site audits, and documented testing have identified the causes that trigger it most consistently. Here is every major root cause with its diagnosis and resolution.

| Root Cause | Why Google Skips the Page | The Fix |
| --- | --- | --- |
| Thin or low-value content | Google deems page insufficiently useful to users | Expand, deepen, and improve content quality significantly |
| Duplicate or near-duplicate content | Google chooses to index one version and excludes others | Consolidate pages, set correct canonical tags, remove duplicates |
| Weak or zero internal links | Page appears orphaned; Google sees no value signal | Add relevant internal links from high-authority pages on your site |
| Noindex tag present | Page explicitly tells Google not to index it | Remove noindex meta tag or x-robots-tag from the page |
| Blocked by robots.txt | Googlebot is disallowed from crawling the URL | Update robots.txt to allow crawling of this URL |
| Poor crawl budget allocation | Google deprioritises page due to low site-wide authority | Improve site authority via backlinks, reduce crawl waste on low-value URLs |
| Slow page load / server errors | Googlebot abandons crawl before reading full content | Improve Core Web Vitals, fix server errors, reduce page load time |
| Redirect chain issues | Multi-hop redirects confuse Googlebot and burn crawl budget | Reduce redirect chains to a single 301 hop where possible |
| Search intent mismatch | Content format does not match what Google expects for the query | Rewrite to match dominant content format ranking for the target keyword |
| New domain / low authority | Google has not yet established trust in the domain | Build quality backlinks, publish consistently, submit sitemap to GSC |
| Excessive URL parameters | Parameter variations create near-duplicate URL sprawl | Canonicalise parameter URLs or block low-value parameter patterns in robots.txt (the GSC URL Parameters tool was retired in 2022) |
| JavaScript rendering failure | Googlebot cannot fully render JS-heavy content | Implement server-side rendering or ensure critical content is in HTML |

Table note: Multiple causes can apply simultaneously. Run through every diagnosis below before concluding which fixes your specific pages require.

Deep-Dive Diagnostics: How to Identify and Fix Each Cause

Fix 1: Thin Content and Low Content Value

Content quality is the most frequently cited reason behind this status. When Google crawls a page and determines that the content provides insufficient value to users — relative to what is already indexed for those queries — it excludes the page from the index.

Thin content includes pages with very low word counts, pages that simply restate information available everywhere else without adding original insight, product descriptions that are manufacturer copy-pasted across multiple sites, and category pages with no descriptive text beyond a product grid.

The fix is substantive content improvement, not padding. Research the top 5 pages currently ranking for your target keyword. Identify what they cover that you do not. Add original analysis, expert perspective, data, examples, or firsthand experience that competitors lack. Google's quality systems increasingly reward E-E-A-T signals — Experience, Expertise, Authoritativeness, and Trustworthiness — and thin pages score poorly on all four dimensions.

Fix 2: Duplicate and Near-Duplicate Content

When Google detects that a page substantially duplicates content found elsewhere on your own site or across the web, it typically indexes the version it considers canonical and excludes the rest. This affects e-commerce sites with product variants, paginated archives, URL parameter variations, and sites that have republished content from other sources.

Run a site audit to identify duplicate content. Use canonical tags to indicate which version of a page Google should index. For URL parameter variations that produce near-identical content, point them at the preferred URL with a canonical tag or keep the low-value patterns out of the crawl with robots.txt rules; the URL Parameters tool that Google Search Console once offered for this was retired in 2022. For external duplication issues, rewrite the content to be genuinely original.
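As a rough illustration of the parameter consolidation described above, the sketch below groups URLs that differ only by parameters that do not change the page content. The `IGNORED_PARAMS` set and both function names are hypothetical; which parameters are safe to ignore depends entirely on your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list: parameters assumed not to change what the page shows.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "sort"}


def canonical_url(url: str) -> str:
    """Drop ignored parameters, sort the rest, and lowercase the host."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def group_duplicates(urls):
    """Map each canonical URL to the variants that collapse onto it."""
    groups = {}
    for url in urls:
        groups.setdefault(canonical_url(url), []).append(url)
    # Only groups with more than one member are duplicate candidates.
    return {canon: members for canon, members in groups.items() if len(members) > 1}
```

Each key in the result is a candidate for the `rel="canonical"` target of the variants listed under it.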

Fix 3: Weak Internal Linking — The Orphan Page Problem

A page that receives zero internal links from other pages on your site is called an orphan page. Orphan pages are among the most common causes of this indexing status. Without internal links pointing to a page, Google receives no signal that the page is important, relevant, or part of a meaningful content structure. It is effectively hidden — accessible by URL but invisible to the crawl path.

To fix orphan pages, identify which of your existing pages already perform well in search and are topically related to the non-indexed page. Add contextual internal links from those pages to the excluded URL. Use descriptive anchor text that reflects the target page's topic. Even a single strong internal link from an authoritative page on your site can dramatically change how Google evaluates the linked page's importance.
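Finding orphan pages comes down to a set difference: every URL you expect to be indexed, minus every URL that receives at least one internal link. The sketch below assumes you already have both inputs, for example a URL list from your sitemap and (source, target) link pairs exported from a site crawler; the function name is hypothetical.

```python
def find_orphans(all_urls, links):
    """Return URLs that no other page on the site links to.

    all_urls: iterable of URLs you expect to be indexed (e.g. from the sitemap).
    links: iterable of (source_url, target_url) internal link pairs.
    """
    # A page linking to itself does not count as an inbound link.
    linked = {target for source, target in links if source != target}
    return sorted(set(all_urls) - linked)
```

Any URL this returns that also shows Crawled - currently not indexed is a strong candidate for the internal linking fix described above.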

Fix 4: Noindex Tags and Robots.txt Conflicts

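This is the most immediately fixable cause and the most embarrassing to discover. If a page carries a meta robots noindex tag or an X-Robots-Tag noindex header, Google will crawl it and then exclude it from the index, exactly as instructed. A robots.txt disallow works differently: it stops Googlebot from fetching the page at all, so Google cannot see the content or any noindex directive on it. A URL that has both rules, or that had one of them when it was last crawled, sends Google conflicting signals.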

Check every affected URL using the URL Inspection Tool in Google Search Console. Look for a noindex directive in the rendered HTML. Check your robots.txt file at yourdomain.com/robots.txt for any disallow rules that might apply to the URL pattern. If either is found, remove the restriction, validate the fix, and resubmit the URL for indexing.
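For a batch of URLs, the same two checks can be scripted. The sketch below uses only the Python standard library and works on content you have already fetched (the page HTML, its response headers, and the robots.txt text); the helper names are hypothetical, and it is a simplified approximation of how Googlebot interprets these rules, not a replacement for the URL Inspection Tool.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class _RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key.lower(): (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attr.get("content", "").lower())


def has_noindex(html: str, headers: dict) -> bool:
    """True if a noindex directive appears in a meta tag or X-Robots-Tag header."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()
    return any("noindex" in d for d in parser.directives) or "noindex" in header_value


def blocked_by_robots(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """True if the given robots.txt text disallows the URL for the agent."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return not rules.can_fetch(agent, url)
```

A URL for which either function returns True needs the restriction removed before any resubmission is worth attempting.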

Fix 5: Crawl Budget Exhaustion on Large Sites

For websites with thousands or tens of thousands of pages, crawl budget becomes a critical limiting factor. Google allocates a finite number of crawl visits to each domain per day. If your site wastes crawl budget on low-value URLs — parameter variations, faceted navigation combinations, empty tag archive pages, session ID URLs — Googlebot may deprioritise or never visit your most important content pages.

Conduct a crawl budget audit using Google Search Console's crawl stats report. Identify URL patterns that generate high crawl volume but low indexing rates. Disallow low-value URL patterns via robots.txt. Consolidate thin archive and tag pages. Reduce redirect chains, which consume extra crawl budget on every hop. Improving crawl budget efficiency is particularly impactful for e-commerce sites with large, dynamic product catalogues.
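Server access logs give a second view of where crawl budget goes. The sketch below counts Googlebot requests per top-level site section and separates parameterised URLs, assuming logs in the common "combined" format. The function name and the `?params` suffix are hypothetical, and matching on the user-agent string alone is an approximation, since that string can be spoofed.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Matches the request, status code, and trailing user-agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits_by_section(log_lines):
    """Count Googlebot requests per first path segment, flagging query strings."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match.group("agent"):
            continue
        parts = urlsplit(match.group("path"))
        section = "/" + parts.path.strip("/").split("/")[0]
        # Parameterised URLs are tracked separately: they are a common source of waste.
        key = section + ("?params" if parts.query else "")
        counts[key] += 1
    return counts
```

Sections with a large share of Googlebot hits but few indexed pages are the first candidates for disallow rules or consolidation.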

Fix 6: Page Speed and Core Web Vitals Failures

If your page loads slowly, throws server errors, or fails to render completely before Googlebot's timeout threshold, the crawler may abandon the visit before reading all of your content. Pages that are partially crawled due to performance issues are more likely to receive low quality evaluations and fail indexing.

Run your affected URLs through Google's PageSpeed Insights and Core Web Vitals report in Search Console. Prioritise fixes to Largest Contentful Paint (LCP) and Cumulative Layout Shift (CLS) scores. Ensure your server response time is under 200ms. Fix any 5xx server errors visible in the crawl stats report.
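When many URLs are affected, it helps to triage measurements in bulk. The sketch below rates LCP and CLS against Google's published "good" and "poor" boundaries (2.5 s and 4.0 s for LCP, 0.1 and 0.25 for CLS) and applies the 200 ms server response target from the paragraph above. The input dictionary shape and function names are hypothetical; the numbers themselves would come from PageSpeed Insights or your own monitoring.

```python
# (good_up_to, poor_above) boundaries per metric.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "cls": (0.1, 0.25),
}
SERVER_RESPONSE_TARGET_MS = 200  # target taken from this guide, not a Google threshold


def rate(metric: str, value: float) -> str:
    """Classify a single measurement as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


def triage(page: dict) -> list:
    """Return the list of performance issues for one page's measurements."""
    issues = []
    for metric in THRESHOLDS:
        rating = rate(metric, page[metric])
        if rating != "good":
            issues.append(f"{metric}: {rating}")
    if page["server_response_ms"] > SERVER_RESPONSE_TARGET_MS:
        issues.append("server_response_ms: above target")
    return issues
```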

Fix 7: Search Intent Mismatch

Google's indexing systems evaluate not just content quality but content format alignment with search intent. If the top-ranking pages for your target keyword are all listicles and how-to guides but your page is formatted as a long-form opinion piece, Google may determine that your page does not serve the query effectively — even if the writing is excellent.

Before writing or rewriting a page, analyse the first page of Google results for your target keyword. Note the dominant content format (listicle, guide, comparison, tool, definition). Match your page's format and structure to what Google already shows users for that query. Intent alignment is one of the most overlooked causes of indexing failures among technically proficient SEO professionals.

Fix 8: JavaScript Rendering Issues

If your website relies heavily on JavaScript to render core page content — and Googlebot cannot fully execute that JavaScript — it may crawl your page and see essentially blank or incomplete content. Thin content that results from a rendering failure will reliably fail the indexing quality threshold.

Test your affected URLs using the URL Inspection Tool's Live Test feature, which shows you what Googlebot actually sees when it renders the page. If critical content is missing from the rendered view, implement server-side rendering (SSR) or static generation for content that needs to be indexed. Ensure all critical text, headings, and structured data are present in the initial HTML response.
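A quick pre-check before opening the Live Test is to confirm that the text you need indexed exists in the raw HTML response, before any JavaScript runs. The sketch below takes HTML you have already fetched and reports which phrases are absent from its visible text; the function name is hypothetical, and it ignores anything inside script and style elements on the assumption that such text is not yet rendered content.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_initial_html(raw_html: str, critical_phrases) -> list:
    """Return the phrases that do not appear in the unrendered HTML text."""
    extractor = _TextExtractor()
    extractor.feed(raw_html)
    # Collapse whitespace so phrases split across lines still match.
    text = " ".join(" ".join(extractor.chunks).split()).lower()
    return [phrase for phrase in critical_phrases if phrase.lower() not in text]
```

If a page's headline or main copy shows up in this list, the content depends on client-side rendering and is a candidate for SSR or static generation.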

Crawled - Currently Not Indexed vs Discovered - Currently Not Indexed: Key Differences

Google Search Console surfaces two similar but fundamentally different indexing error statuses that are frequently confused with each other. Understanding the distinction is essential for diagnosing the correct root cause and applying the right fix.

| Factor | Crawled - Currently Not Indexed | Discovered - Currently Not Indexed |
| --- | --- | --- |
| Meaning | Google crawled page but did NOT index it | Google found URL but has NOT crawled it yet |
| Severity | Higher: Google evaluated and rejected | Lower: Google just hasn't visited yet |
| Primary cause | Content quality, technical issues, or intent mismatch | Crawl budget exhaustion, low internal links, new URL |
| Fix priority | Fix content / technical issues first | Improve internal links and crawl budget allocation |
| Time to resolve | Days to weeks after content fixes | Can resolve faster once crawl budget improves |
| SpeedIndex Pro helps? | Yes: submit after fixing content issues | Yes: direct crawl signal triggers immediate visit |

Crawled - Currently Not Indexed is the more serious status. Google evaluated your page and actively decided against indexing it. This almost always indicates a content quality, technical, or intent alignment issue that requires your direct intervention.

Discovered - Currently Not Indexed typically means Google found the URL — usually via your sitemap or an internal link — but has not yet assigned it a crawl visit. This is more likely a crawl budget or site authority issue. For newer sites and lower-authority domains, this status is common and often resolves over time as domain authority grows — or immediately if you use SpeedIndex Pro to send a direct API signal.

Diagnostic rule: If you see Crawled - Currently Not Indexed: fix content and technical issues first, THEN resubmit. If you see Discovered - Currently Not Indexed: improve internal linking and crawl budget first OR use SpeedIndex Pro to trigger an immediate crawl visit.

The Complete Step-by-Step Fix Checklist for Crawled - Currently Not Indexed

Work through this checklist in order for every URL showing this status. Each step builds on the previous one. Do not skip to step seven before confirming the earlier steps are clean.

1. Open Google Search Console and navigate to Indexing > Pages > Crawled - currently not indexed

2. Click each affected URL and open the URL Inspection Tool, then check the rendered HTML view for noindex tags, robots.txt blocks, and content rendering issues

3. Verify the page has no noindex meta tag in the HTML source or HTTP headers

4. Verify the page is not disallowed in your robots.txt file

5. Check the page's internal link count; if it is zero (orphan page), add at least two relevant internal links from established pages on your site

6. Evaluate the content quality against the top 5 ranking pages for your target keyword and identify gaps in depth, originality, and usefulness

7. Rewrite or significantly expand thin content, prioritising original insight, data, examples, or experience that competing pages lack

8. Check for duplicate content issues using a plagiarism checker and a site: search query for your main content phrases

9. Run the URL through PageSpeed Insights and fix any critical performance issues

10. Verify the page's content format matches the dominant search intent for its target keyword

11. Check for JavaScript rendering issues using URL Inspection Live Test

12. Once all fixes are in place, use SpeedIndex Pro to submit the corrected URL directly to Google's Indexing API, triggering a fresh crawl and evaluation within minutes

Time-saving tip: Do not work through affected URLs one by one if you have many. Group them by cause type first: all thin content pages together, all orphan pages together, all noindex issues together. Fix by batch, then submit the entire corrected batch through SpeedIndex Pro in a single bulk submission.
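The batching step can be as simple as a grouping pass over your diagnosis notes. The sketch below assumes you have recorded one (URL, cause) pair per affected page; the function name and cause labels are hypothetical.

```python
from collections import defaultdict


def batch_by_cause(diagnoses):
    """Group diagnosed URLs so each cause can be fixed and submitted as one batch.

    diagnoses: iterable of (url, cause) pairs, e.g. ("/guide", "thin content").
    """
    batches = defaultdict(list)
    for url, cause in diagnoses:
        batches[cause].append(url)
    return dict(batches)
```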

When You Should NOT Fix Crawled - Currently Not Indexed

Not every URL appearing under this status requires action. Google's decision not to index certain pages is often the correct outcome, and attempting to force those pages into the index can actually hurt how Google assesses your site's overall quality and waste crawl budget.

You should leave this status in place and take no action for the following URL types:

• Paginated archive pages beyond page 2 — Google typically indexes page 1 of a paginated archive only

• URL parameter variations of the same content — filter combinations, sorting parameters, session IDs

• XML sitemaps appearing as crawlable URLs — these are infrastructure files, not content pages

• Internal search result pages — dynamic content generated by users searching within your site

• Staging or test pages that have been inadvertently exposed — disallow these in robots.txt

• Admin, dashboard, or login pages that have no business being in search results

• Thank-you pages, order confirmation pages, and other post-conversion pages

• Thin affiliate or generated pages that add no original value to users

A common and costly mistake is to see a large number of excluded pages and instinctively try to get all of them indexed. For most established websites, having a significant percentage of URLs excluded from the index is entirely healthy. The goal is to have all of your genuinely valuable, unique, user-serving pages indexed — not to achieve a 100% indexing rate across every URL your server can generate.

Rule of thumb: Before working to index any excluded URL, ask yourself: would a real user find this page valuable and different from everything else on my site? If the honest answer is no, leave it excluded and focus your time on pages that genuinely deserve to rank.

The Fastest Way to Reindex Fixed Pages: SpeedIndex Pro and Google's Official Indexing API

Once you have diagnosed the root cause, applied the appropriate content or technical fixes, and verified that the page now genuinely deserves to be in Google's index — the single biggest mistake you can make is to sit and wait for Googlebot to rediscover the updated URL organically.

Google's natural recrawl cycle is unpredictable. A page that was previously crawled and excluded may not receive a follow-up crawl visit for days or weeks — even after significant content improvements. During that waiting period, your fixed pages continue generating zero organic traffic while your competitors' indexed pages capture every search click.

SpeedIndex Pro eliminates this waiting period entirely. Our platform uses Google's official Indexing API to fire a direct crawl signal to Googlebot within seconds of your submission. Google receives the notification, Googlebot visits the updated page and re-evaluates the content against its current quality standards, and the page enters the index, in under 2 minutes in the vast majority of cases.

The Exact Workflow: Fix and Reindex in Under 10 Minutes

1. Identify the affected URLs in Google Search Console under Crawled - Currently Not Indexed

2. Diagnose the root cause using the step-by-step checklist earlier in this guide

3. Apply the appropriate fix: content improvement, internal link addition, noindex removal, or technical correction

4. Verify the fix is live by checking the URL in your browser and confirming the change is visible

5. Open SpeedIndex Pro, paste the corrected URL (or full batch of corrected URLs)

6. Click submit, and SpeedIndex Pro fires the Google Indexing API call within seconds

7. Watch the real-time dashboard confirm acceptance; your URL is now in Googlebot's crawl queue

8. Most corrected pages complete the full re-crawl and indexing cycle in under 2 minutes
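For readers curious about what the submit step involves, the sketch below builds a single URL_UPDATED notification for Google's Indexing API without sending it. The endpoint and payload follow Google's public API; the function name is hypothetical, the access token is assumed to be an OAuth 2.0 token with the `https://www.googleapis.com/auth/indexing` scope obtained elsewhere, and whether SpeedIndex Pro issues exactly this request internally is an assumption. Google's own documentation describes the API for job posting and livestream pages, so treat its behaviour on other page types as something to verify rather than assume.

```python
import json
import urllib.request

ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"


def build_notification(url: str, access_token: str) -> urllib.request.Request:
    """Build (but do not send) a URL_UPDATED notification for one page."""
    body = json.dumps({"url": url, "type": "URL_UPDATED"}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Placeholder token: obtaining one is outside the scope of this sketch.
            "Authorization": f"Bearer {access_token}",
        },
    )
```

Passing the returned object to `urllib.request.urlopen` would send it; the HTTP status of the response is the kind of per-URL result the workflow above refers to.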

Why SpeedIndex Pro Works Where Manual GSC Requests Fall Short

Google Search Console's built-in URL Inspection Tool has a 'Request Indexing' button that many SEOs use to resubmit fixed pages. This is better than waiting passively — but it has significant limitations. The GSC request indexing feature has strict daily quotas per property. It processes one URL at a time. It can be delayed by hours or longer. And it is notorious for showing no visible indication of when — or whether — the crawl actually occurred.

SpeedIndex Pro uses Google's official Indexing API — the same underlying mechanism, but accessed directly, at scale, with real-time confirmation. You can submit hundreds of corrected URLs in a single bulk operation, see the exact API response code for every URL instantly, identify any that still have technical issues preventing indexing, and retry them with one click. There is no daily quota anxiety, no one-at-a-time bottleneck, and no uncertainty about whether the signal was received.

SpeedIndex Pro key advantage: Submit an entire batch of corrected URLs (blog posts, backlinks, product pages, fixed technical errors) in one operation. See real-time Google API responses for every URL. Retry failures in one click. Full submission history logged with timestamps and credit usage.

No Google Search Console Login Required

One of the most common questions from SEO professionals managing client sites is whether using SpeedIndex Pro requires connecting Google Search Console accounts. The answer is no. SpeedIndex Pro handles all Google Indexing API authentication on its own server infrastructure. You paste the URLs. You click submit. SpeedIndex Pro does the rest. Your client's GSC property, credentials, and data stay completely private.

Preventing Crawled - Currently Not Indexed From Reoccurring

Fixing existing indexing failures is one side of the equation. Building a site architecture and content workflow that prevents this status from accumulating on new content is equally important for long-term SEO performance. Here are the structural practices that keep indexing rates high across your entire site.

Publish a Quality Threshold Before Every Post

Before any new page goes live, run it through a simple quality checklist: Does this page answer the search query better or differently than anything currently ranking? Does it contain original insight, experience, or data not available elsewhere? Is the content format aligned with the dominant intent for the target keyword? If the answer to any of these is no, the page is not ready to publish. Pages that fail this standard at publication are far more likely to accumulate this status from day one.

Build Internal Links on the Same Day You Publish

One of the most consistent patterns in indexing failures is new pages that go live with zero internal links and then receive this status within days of the first Googlebot visit. Make it a standing workflow rule to add internal links from at least two existing high-authority pages on your site on the same day you publish new content. This signals immediate importance and relevance to Googlebot on its first visit.

Submit New URLs Through SpeedIndex Pro on Publication

Do not wait for Googlebot to discover new pages organically. Build SpeedIndex Pro into your publication workflow. Every time you publish a new article, product page, or landing page, submit the URL through SpeedIndex Pro immediately. The page enters Google's index within minutes of going live, captures any early search traffic for the topic, and begins accumulating ranking signals from day one rather than day fourteen.

Audit Your GSC Excluded Pages Report Monthly

Set a recurring monthly calendar reminder to review the Crawled - Currently Not Indexed section of your Google Search Console Pages report. Triage newly appeared URLs against the cause checklist in this guide. Fix high-priority pages — commercial pages, important blog content, new backlinks — immediately. Dismiss low-priority URLs that should legitimately be excluded. Maintaining this monthly cadence prevents a small indexing problem from compounding into a major one over time.

Keep Crawl Budget Clean on Large Sites

If your site has more than a few thousand pages, crawl budget management becomes an ongoing operational responsibility. Audit your crawl stats quarterly. Identify URL patterns that generate high crawl volume but low or zero indexing rates. Systematically disallow or canonicalise low-value URL patterns before they grow to the point of consuming significant crawl budget that should be directed at your best content.

Frequently Asked Questions

How long does it take for Google to reindex a fixed page?

Without intervention, the natural recrawl cycle for a previously crawled page can take anywhere from a few days to several weeks — there is no guaranteed timeline. Using SpeedIndex Pro to submit the corrected URL directly to Google's official Indexing API compresses this to under 2 minutes in the vast majority of cases. The recrawl happens immediately after the API signal is received by Google.

Can I just click Request Indexing in Google Search Console?

Yes, and it is better than doing nothing. However, the GSC Request Indexing feature is limited to one URL at a time, carries daily property-level quotas, and provides no real-time confirmation that the crawl occurred or when Google actually evaluated the updated page. SpeedIndex Pro uses the same underlying Google Indexing API but handles bulk submissions, provides real-time API response codes for every URL, and has no one-at-a-time workflow bottleneck.

Will my page rank immediately after being indexed?

Indexing and ranking are separate processes. Getting indexed means the page is in Google's database and eligible to appear in search results. Where it actually ranks depends on content quality, backlink authority, Core Web Vitals, search intent alignment, and dozens of other ranking factors. Indexing is the prerequisite — without it, ranking is impossible. With it, the ranking process begins.

My page has been fixed for weeks but is still not indexed — what do I do?

If you have applied content and technical fixes but the page remains excluded after weeks of waiting, the most likely issues are that the fix was not substantial enough, there is still an unresolved technical barrier, or Google has not yet revisited the page after your changes. Use SpeedIndex Pro to submit the URL directly to the Indexing API — this forces an immediate fresh evaluation of the current version of the page rather than waiting indefinitely for organic recrawl.

How many pages showing this status is normal?

There is no universal benchmark, as it varies significantly by site type, size, and content strategy. For a content-heavy site with a clear topical focus and consistent publishing quality, having 5 to 15 percent of URLs excluded is broadly normal. For sites with thin content, large parameter URL sprawl, or weak domain authority, this percentage can be much higher. The priority is always fixing pages that genuinely deserve to rank — not chasing a low exclusion percentage for its own sake.

Does building backlinks help fix this status?

Backlinks help in two ways. They increase your domain authority, which improves crawl budget allocation across your entire site. And they provide additional discovery signals that can trigger Googlebot to re-evaluate previously excluded pages. However, backlinks alone will not fix a page with thin content or technical issues — those root causes need to be resolved directly. Use SpeedIndex Pro to index the backlinks you build as well, ensuring they deliver link equity immediately rather than waiting weeks for Google to discover them.

What is the difference between deindexed and crawled not indexed?

A deindexed page was previously in Google's index and has since been removed — either by a manual action, a quality algorithm update, or a noindex tag added after initial indexing. A page showing Crawled - Currently Not Indexed was crawled but never added to the index in the first place. Both conditions result in the same outcome — the page is absent from search results — but they have different diagnostic paths and different fixes.

Fix Your Indexing Issues Today — Then Submit With SpeedIndex Pro

Crawled - Currently Not Indexed is a fixable problem. Every cause listed in this guide has a specific, proven solution. The key is systematic diagnosis — working through each potential cause methodically rather than making vague improvements and hoping Google notices.

Fix the root cause first. That is non-negotiable. SpeedIndex Pro cannot index a page that Google has legitimately decided does not meet its quality standards — no indexing tool can, and any service claiming otherwise is not being honest with you.

But once your fixes are live — once your content is genuinely improved, your technical issues are resolved, and your page truly deserves to be in Google's index — do not wait weeks for Googlebot to rediscover the updated URL on its own schedule. Submit it through SpeedIndex Pro and get it evaluated within minutes.

• Submit single URLs or bulk batches of corrected pages in one operation

• See real-time Google API response codes for every URL — instant confirmation or instant diagnosis

• Retry failed submissions in one click after resolving remaining technical issues

• No Google Search Console login required — no credential sharing, no GSC property access

• Credits never expire — submit on your schedule, not a monthly billing cycle

• Free bonus credits on signup — test real submissions before purchasing

Every day a fixed page sits unindexed is a day it generates zero organic traffic, zero leads, and zero revenue. The fixes exist. The fastest reindexing method exists. Use both.

Get started: Visit speedindex.pro, create your free account, and claim your signup bonus credits. Submit your first fixed URL and see it enter Google's index in under 2 minutes. Stop waiting for Googlebot. Fix the issue, submit the URL, get indexed.